Application Modernization at Speed and Scale

Enterprises are pursuing greater application scalability, cost efficiency, and standardization with containerization and virtualization platforms. So, what’s the difference? Containers are a form of operating-system-level virtualization that lets users run multiple isolated applications on a single instance of an OS, all sharing its kernel. They are lightweight and portable, making them ideal for running applications across different environments.

Virtualization is when a single physical machine runs multiple virtual machines on its hardware, each with its own operating system. While both options are designed to allow development teams to deploy software faster and more efficiently, they serve different purposes. In this article, we will look more closely at containers and virtualization so you can decide which is right for your business.

What is Virtualization?

The cloud is a multi-tenant environment where multiple people run services on the same server hardware. To achieve a shared environment, cloud providers use virtualization technology.

Virtualization is achieved using a hypervisor, which splits CPU, RAM, and storage resources between multiple virtual machines (VMs). Each user on the hypervisor gets their own operating system environment.
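To make the resource split concrete, here is a minimal sketch in Python. It is a toy model, not a real hypervisor API: the `Host` and `VM` classes and the tenant names are hypothetical, and it assumes a strict no-overcommit policy, whereas real hypervisors often oversubscribe CPU.

```python
from dataclasses import dataclass, field


@dataclass
class VM:
    name: str
    vcpus: int
    ram_gb: int
    disk_gb: int


@dataclass
class Host:
    """Toy stand-in for a hypervisor host; not a real hypervisor API."""
    cpus: int
    ram_gb: int
    disk_gb: int
    vms: list = field(default_factory=list)

    def free(self):
        """Return (cpus, ram_gb, disk_gb) not yet assigned to any VM."""
        return (
            self.cpus - sum(vm.vcpus for vm in self.vms),
            self.ram_gb - sum(vm.ram_gb for vm in self.vms),
            self.disk_gb - sum(vm.disk_gb for vm in self.vms),
        )

    def allocate(self, vm: VM) -> bool:
        """Place the VM only if every requested resource still fits."""
        free_cpu, free_ram, free_disk = self.free()
        if vm.vcpus > free_cpu or vm.ram_gb > free_ram or vm.disk_gb > free_disk:
            return False
        self.vms.append(vm)
        return True


# Assumed example sizes: one 16-CPU host and five identical tenant requests.
host = Host(cpus=16, ram_gb=64, disk_gb=1000)
placed = [
    host.allocate(VM(f"tenant-{i}", vcpus=4, ram_gb=16, disk_gb=200))
    for i in range(5)
]
print(placed)       # [True, True, True, True, False]
print(host.free())  # (0, 0, 200)
```

The fifth request is refused because CPU and RAM are exhausted, even though disk space remains. This is the kind of per-server packing that lets a provider serve several tenants from one machine.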

The individual VMs are isolated from one another, yet they all share the same hardware. This means cloud platforms like AWS can maximize resource utilization per server with multiple tenants, enabling lower prices for enterprises through economies of scale.

What is Containerization?

Containerization is a form of virtualization. Virtualization aims to run multiple OS instances on a single server, whereas containerization runs a single OS instance with multiple user spaces that isolate processes from one another. This means containerization makes sense for a single AWS cloud user who plans to run multiple processes simultaneously.
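On Linux, these isolated user spaces are built on kernel namespaces. The sketch below does not create a container. It only inspects which namespaces a process belongs to by reading `/proc/<pid>/ns`, assuming a Linux host. The helper name `namespace_ids` is our own, and on other systems it returns an empty dict.

```python
import os


def namespace_ids(pid="self"):
    """Map namespace type (e.g. 'pid', 'net', 'mnt') to its kernel identifier.

    Reads /proc/<pid>/ns, which exists only on Linux; returns an empty
    dict on other systems or if the directory is not readable.
    """
    ns_dir = f"/proc/{pid}/ns"
    ids = {}
    try:
        for entry in sorted(os.listdir(ns_dir)):
            try:
                # Each entry is a symlink such as 'net:[4026531840]'.
                ids[entry] = os.readlink(os.path.join(ns_dir, entry))
            except OSError:
                continue
    except OSError:
        return {}
    return ids


for name, ident in namespace_ids().items():
    print(f"{name:>20}  {ident}")
```

Two processes in the same container report the same identifiers, while a process in another container typically reports different ones for namespaces such as `pid`, `net`, and `mnt`, even though both run on the same kernel.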

How is Containerization Related to Microservices?

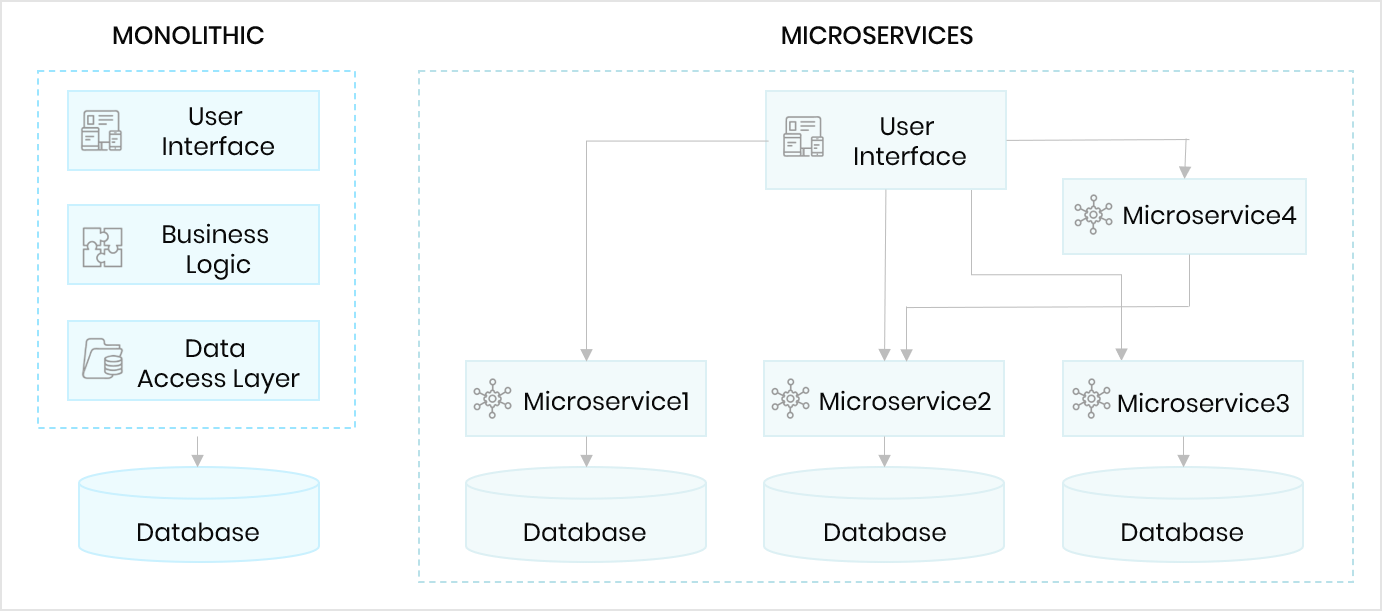

Microservice architectures decouple the main components of an application into single-purpose, isolated services. Because the components operate independently of one another, a fault in one is less likely to cause errors elsewhere or a complete service outage.

A container typically holds a single function for a specific task, that is, one microservice. By splitting each individual application function into a container, microservices improve enterprise service resilience and scalability.

Containerization also allows single application components to be updated in isolation, without affecting the rest of the technology stack. This ensures that security and feature updates are applied rapidly, with minimal disruption to overall operations.
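As an illustration of "one function per container", here is a minimal sketch of a single-purpose service using only the Python standard library. The price-lookup task, the `PRICES` data, and the `/health` and `/price/<item>` paths are all hypothetical, and a production service would normally use a proper web framework.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

PRICES = {"widget": 9.99, "gadget": 24.50}  # illustrative data only


def route(path):
    """Pure routing logic: return (status_code, payload) for a request path."""
    if path == "/health":
        return 200, {"status": "ok"}
    if path.startswith("/price/"):
        item = path[len("/price/"):]
        if item in PRICES:
            return 200, {"item": item, "price": PRICES[item]}
        return 404, {"error": "unknown item"}
    return 404, {"error": "not found"}


class PriceHandler(BaseHTTPRequestHandler):
    """HTTP wrapper around route(); the service does nothing else."""

    def do_GET(self):
        status, payload = route(self.path)
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(host="0.0.0.0", port=8080):
    """Build the server without starting it; the caller decides how to run it."""
    return ThreadingHTTPServer((host, port), PriceHandler)


# To run the service: make_server().serve_forever()
```

Because the service owns one narrow responsibility, its container image can be rebuilt and redeployed without touching the rest of the application. The `/health` path gives an orchestrator a simple way to check that an updated instance is ready.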

7 Differences Between Virtualization and Containerization

At a technical level, both techniques rely on similar principles but produce different outcomes. Here are the primary differences between the two.

Isolation

Virtualization gives each VM a fully isolated operating system instance, while containerization isolates containers from one another and from the host operating system at the process level. Because containers share the host kernel, however, all of them are at risk if an attacker gains control of the host.

Different Operating Systems

Virtualization can host more than one complete operating system, each with its own kernel, whereas containerization runs all containers in user space on a single OS kernel.

Guest Support

Virtualization allows a range of operating systems to be used on the same server or machine. Containerization, on the other hand, relies on the host OS kernel, meaning Linux containers cannot run natively on Windows and vice versa.

Deployment

With virtualization, virtual machines are created and managed by a hypervisor, and a single hypervisor can host many VMs. With containerization, Docker is typically used to deploy an individual container, while Kubernetes is used to orchestrate multiple containers across multiple systems.
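For the Kubernetes case, deployment is usually described declaratively. The sketch below builds a minimal Deployment manifest as a Python dict and prints it as JSON, which `kubectl` accepts alongside YAML. The service name, image URL, replica count, and port are placeholder assumptions.

```python
import json


def deployment_manifest(name, image, replicas=3, port=8080):
    """Build a Kubernetes Deployment (apps/v1) as a plain dict."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


# Hypothetical service name and registry URL, for illustration only.
manifest = deployment_manifest(
    "price-service", "registry.example.com/price-service:1.0"
)
print(json.dumps(manifest, indent=2))
```

Saved to a file, the output could be applied with `kubectl apply -f`. The orchestrator then works to keep the requested number of replicas running across the cluster's nodes.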

Persistent Virtual Storage

Virtualization assigns a virtual hard disk (VHD) to each individual virtual machine, or a Server Message Block (SMB) file share if shared storage is used across multiple servers. With containerization, the local hard disk is used for storage per node, with an SMB share for shared storage across multiple nodes.

Virtual Load Balancing

With virtualization, failover clusters run VMs with load balancing support. With containerization, an orchestrator such as Docker or Kubernetes starts and stops containers automatically, which maximizes resource utilization. However, containers may be decommissioned to rebalance load once limits on available resources are reached.

Virtualized Networking

Virtualization uses virtual network adaptors (VNA) to facilitate networking, running through a master network interface card (NIC). With containerization, the VNA is split into multiple isolated views for lightweight network virtualization.

What Are the Benefits and Disadvantages of Virtualization?

What Are the Benefits of Virtualization?

Virtualization can increase application scalability while simultaneously reducing expenses. Here are five more ways virtualization can help your business:

- More efficient resource utilization via multi-tenant support on hardware.

- High availability by spinning up a virtualized resource immediately and decommissioning it once processes complete.

- Greater business continuity with easy virtual instance recovery via duplication and backups.

- Virtual machines can be deployed quickly from prebuilt images that already contain the underlying OS and dependencies.

- Cloud portability is enhanced thanks to virtualization, leading to easier multi-cloud migrations.

What Are the Disadvantages of Virtualization?

While virtualization does offer the ability to run multiple applications on a single physical server, it can also hinder performance. Here are five more considerations when deciding if virtualization is right for your business:

- The return on investment (ROI) with virtualization can take years to materialize: upfront costs are higher, even though day-to-day costs are lower overall.

- Public cloud virtual instances can have a risk of data loss or breach, due to multi-tenant infrastructure and the possibility of data or kernel leaks to other users.

- Scaling multiple virtualized instances can take a long time, which is a problem where velocity is key.

- Hypervisor technologies come with a performance overhead, meaning lower performance from the same amount of resources.

- Virtual servers containing virtualized instances can sprawl endlessly, creating additional management burdens for the IT department if not monitored.

What Are the Benefits and Disadvantages of Containerization?

What Are the Benefits of Containerization?

The platform-agnostic nature of containerization makes it an appealing solution for scaling cloud-based applications. Here are three more benefits to help you decide if containerization is right for you:

- Containers are lightweight and fast to deploy. Compared to virtualization, where each instance may be gigabytes (GB) in size, containers can be mere megabytes (MB) in size.

- Thanks to dependencies, libraries, binaries, and configuration files being bundled together, containers can be redeployed as needed to any platform or environment.

- The lightweight nature of containers can lead to meaningful operational and developmental cost reductions.

What Are the Disadvantages of Containerization?

While containerization offers scalability and agility when modernizing applications in the cloud, it also has several drawbacks. Here are five disadvantages of containerization:

- Containerization is well-supported on Linux-based distributions, but Windows support is not truly adequate for enterprise use. This limits users to Linux in most use cases.

- A kernel vulnerability can expose every container that shares the affected host kernel, including every container on a Kubernetes (K8s) node, not just an isolated few.

- Networking is more complex because containers on a single server share the host's network interfaces. Connecting them requires a network bridge or a macvlan driver (which gives each container interface its own MAC address on the physical network) to map container network interfaces to host interfaces.

- Monitoring hundreds of containers containing individual processes is more difficult than monitoring multiple processes on a single virtual machine instance.

- Containerization does not always benefit workloads and can sometimes result in worse performance.
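For the networking point in the list above, the macvlan option can be sketched with the Docker CLI. The helper below only assembles the `docker network create` command and does not run it. The network name, subnet, gateway, and parent interface are placeholder assumptions that would need to match your own environment.

```python
import shlex


def macvlan_network_cmd(name, subnet, gateway, parent):
    """Return the argv for `docker network create` with the macvlan driver."""
    return [
        "docker", "network", "create",
        "-d", "macvlan",
        f"--subnet={subnet}",
        f"--gateway={gateway}",
        "-o", f"parent={parent}",  # host interface the containers attach to
        name,
    ]


# Placeholder values; substitute the real subnet and host interface.
cmd = macvlan_network_cmd("app-net", "172.16.86.0/24", "172.16.86.1", "eth0")
print(shlex.join(cmd))
# docker network create -d macvlan --subnet=172.16.86.0/24 --gateway=172.16.86.1 -o parent=eth0 app-net
```

Returning the command as a list keeps it easy to review before anything is executed. If needed, it could later be passed to `subprocess.run` on a host where Docker is installed.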

Need Help with Containerization?

At Trianz, our containerization services leverage a toolbox consisting of reusable frameworks, deployment templates, and automations designed to generate speed and efficiency at scale.

For migrating and modernizing applications, our tried and tested approach to deploying a self-sufficient team has repeatedly proven to deliver custom software solutions, custom application development, data management, integration, and software advisory services on time and on budget.

Are you looking to modernize legacy applications?

Trianz and AWS can help re-platform legacy applications to modern AWS-managed containers in less than half the time of traditional engineering practices. Click the link below to learn more about how Trianz can accelerate your next application modernization initiative.

Learn More About AWS Application Modernization

Experience the Trianz Difference

Trianz enables digital transformations through effective strategies and excellence in execution. Collaborating with business and technology leaders, we help formulate and execute operational strategies to achieve intended business outcomes by bringing the best of consulting, technology experiences and execution models.

Powered by knowledge, research, and perspectives, we enable clients to transform their business ecosystems and achieve superior performance by leveraging infrastructure, cloud, analytics, digital, and security paradigms. Reach out to get in touch or learn more.