What is a Hybrid Data Center?

A hybrid data center is a computing environment that combines on-premises and cloud-based infrastructure so that applications and data can be shared across physical data centers and multi-cloud environments. This lets organizations balance the security of on-premises infrastructure with the agility of a public cloud environment.

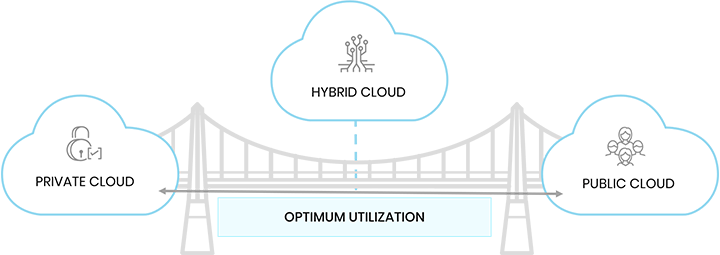

Public, Private, or Hybrid Data Center?

Whether you are looking to implement a public, private, or hybrid data center, it is important to understand each option, its advantages, and which model will support your current demands and long-term goals.

Private Cloud

A private cloud is dedicated to a single organization, which typically owns and operates it. A private cloud is ideal for large organizations with stringent governance and security standards.

Public Cloud

A public cloud is owned and operated by a third-party service provider, and its servers share resources among many different organizations (tenants). This makes it ideal for small businesses or companies just getting into cloud computing.

Hybrid Data Center

A hybrid model combines the security benefits of a physical data center with the flexibility of a multi-cloud environment spanning public and private clouds. This model is ideal for companies that need to distribute traffic across multiple clouds to handle spikes in server demand while remaining compliant with industry and regulatory requirements.

Advantages of a Hybrid Data Center

Flexibility

With a hybrid data center, organizations can diversify their infrastructure across multiple cloud platforms. This gives them the flexibility to choose between "best-of-breed" hardware solutions and the highest-performing, most cost-effective services available in the cloud.

Security

With an accredited, security-focused solution for data storage, you can protect your data while keeping IT operations spending in the cloud to a minimum. Because a hybrid data center is cloud-agnostic, you can use technologies like single sign-on (SSO), which lets users authenticate once with a single set of credentials across on-premises and cloud applications instead of juggling a separate username and password for each. This improves account security while making resources on your network easier to access.

Scalability and Control

By retaining on-premises infrastructure in a hybrid data center, organizations avoid the risks of handing sensitive data over to a third party. This supports security compliance while still letting them offload less sensitive workloads to the cloud, where capacity can scale on demand.
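The placement rule described above can be sketched as a simple policy: classify each workload by the sensitivity of the data it handles, keep anything at or above a chosen threshold on-premises, and send the rest to the cloud. This is a minimal sketch under assumptions: the four sensitivity levels, the default threshold, and the workload names are hypothetical, and a real policy would also weigh latency, licensing, and regulatory location rules.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    # Hypothetical classification scheme; adapt to your own data policy.
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    RESTRICTED = 4  # e.g. regulated data that must not leave the corporate network


@dataclass
class Workload:
    name: str
    sensitivity: Sensitivity


def place(workload: Workload, threshold: Sensitivity = Sensitivity.CONFIDENTIAL) -> str:
    """Keep workloads at or above the threshold on-premises; offload the rest."""
    if workload.sensitivity.value >= threshold.value:
        return "on-premises"
    return "public-cloud"


workloads = [
    Workload("payroll-db", Sensitivity.RESTRICTED),
    Workload("marketing-site", Sensitivity.PUBLIC),
    Workload("analytics-batch", Sensitivity.INTERNAL),
]
for w in workloads:
    print(f"{w.name}: {place(w)}")
```

Lowering the threshold to `Sensitivity.INTERNAL` would keep the analytics job on-premises too, which is how an organization with stricter compliance requirements might tune the same rule.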

Reduced Costs

For many businesses, relying solely on on-premises data centers becomes cost-prohibitive over time. The burden of server maintenance falls on your IT department, which means hiring IT engineers to do the work. And when on-premises capacity cannot scale to meet unexpected peaks in traffic, operational agility suffers and hardware upgrades drive up costs.
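To make the cost argument concrete, compare sizing owned hardware for peak demand against owning only the baseline and renting cloud capacity for the peak ("cloud bursting"). This is a back-of-the-envelope sketch: every figure below (server counts, the annual cost per owned server, burst hours, and the hourly cloud rate) is hypothetical and chosen only to illustrate the arithmetic.

```python
def on_prem_only_cost(peak_servers, annual_cost_per_server):
    # Owned capacity must cover the peak, even though it sits idle most of the year.
    return peak_servers * annual_cost_per_server


def hybrid_cost(baseline_servers, peak_servers, annual_cost_per_server,
                burst_hours, cloud_rate_per_server_hour):
    # Own enough servers for the baseline; rent the difference only while the peak lasts.
    owned = baseline_servers * annual_cost_per_server
    burst = (peak_servers - baseline_servers) * burst_hours * cloud_rate_per_server_hour
    return owned + burst


# Hypothetical figures, for illustration only.
print(f"{on_prem_only_cost(180, 6_000):,.0f}")            # 1,080,000
print(f"{hybrid_cost(100, 180, 6_000, 400, 1.50):,.0f}")  # 648,000
```

The hybrid option wins here because the 80 extra servers are needed for only 400 hours a year. The comparison deliberately ignores data egress, licensing, and staffing costs, which can shift the result either way.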

Why Companies Are Making the Switch to Hybrid Data Centers

As businesses increasingly aim to drive innovation around application portfolios, hybrid data centers provide the scale, capacity, power, and connectivity required to turn objectives into measurable outcomes.

The most common reason for this switch is that many companies have application portfolios that require both public and private cloud platforms. Combining on-premises infrastructure with the public cloud simplifies the management of applications spread across multiple locations and lets organizations run different workload configurations side by side.

A single management platform also gives companies more flexibility in how they deploy individual solutions. For instance, at various stages of the software development lifecycle (SDLC), a hybrid infrastructure can absorb fluctuations in traffic by adjusting storage and configurations on demand. Beyond supporting the digital strategy at lower cost, this ability to port applications and workloads from one cloud platform to another makes hybrid cloud a more desirable option.
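"Adjusting on demand" usually means an autoscaling rule. The sketch below is modeled on the proportional formula documented for the Kubernetes Horizontal Pod Autoscaler, desired = ceil(current replicas × current utilization / target utilization), clamped to minimum and maximum bounds. It omits the tolerance band and stabilization windows the real controller applies, and the default bounds are assumptions.

```python
import math


def desired_replicas(current_replicas, current_utilization, target_utilization,
                     min_replicas=1, max_replicas=20):
    """Proportional scaling: resize so average utilization moves toward the target."""
    if target_utilization <= 0:
        raise ValueError("target_utilization must be positive")
    raw = math.ceil(current_replicas * current_utilization / target_utilization)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, raw))


print(desired_replicas(4, 90, 60))    # 6: traffic spike, scale out
print(desired_replicas(6, 20, 60))    # 2: quiet period, scale in
print(desired_replicas(10, 300, 60))  # 20: capped at max_replicas
```

Utilization is passed as whole percentages to keep the arithmetic exact; in a hybrid setup, the replicas added above the on-premises ceiling are the ones that would land in the cloud.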

Using IoT and APIs in Tandem with a Hybrid Data Center

As the Internet of Things (IoT) drives the interconnection of devices, application programming interfaces (APIs) act as gateways to valuable company information. While public clouds play a significant role by providing scalability and cost-efficiency for developing artificial intelligence (AI), machine learning, and Big Data applications, they are not always the right model to use.

Because APIs distribute sensitive data to various stakeholders, company policy and compliance regulations often require a significant portion of that data to remain on the corporate network. That is why using IoT in tandem with a hybrid cloud is essential for assuring the security and integrity of both internal and external APIs.

To do this, organizations need a secure, unified approach to IoT and API management, including a self-hosted API management gateway that supports hybrid and multi-cloud environments.
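At its core, a self-hosted gateway in a hybrid setup decides, per request, which backend serves it. The sketch below shows that decision as a longest-prefix routing table: endpoints carrying sensitive data resolve to an on-premises upstream, high-volume IoT traffic resolves to a cloud upstream, and anything unmatched returns `None` so the gateway can deny it by default. The route table and the `example.com` hostnames are hypothetical, and this is not the configuration format of any particular gateway product.

```python
from typing import Dict, Optional

# Hypothetical routing table: path prefix -> upstream base URL.
ROUTES: Dict[str, str] = {
    "/api/customers": "https://onprem.example.com",  # personal data stays on the corporate network
    "/api/payments": "https://onprem.example.com",
    "/api/telemetry": "https://cloud.example.com",   # high-volume IoT traffic, elastic backend
    "/api/catalog": "https://cloud.example.com",
}


def resolve_upstream(path: str, routes: Dict[str, str] = ROUTES) -> Optional[str]:
    """Return the upstream for the longest matching prefix, or None if no route matches."""
    best = None
    for prefix in routes:
        # Match whole path segments so "/api/customersX" does not hit "/api/customers".
        if path == prefix or path.startswith(prefix + "/"):
            if best is None or len(prefix) > len(best):
                best = prefix
    return routes[best] if best is not None else None


print(resolve_upstream("/api/customers/42"))     # https://onprem.example.com
print(resolve_upstream("/api/telemetry/batch"))  # https://cloud.example.com
print(resolve_upstream("/admin"))                # None
```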

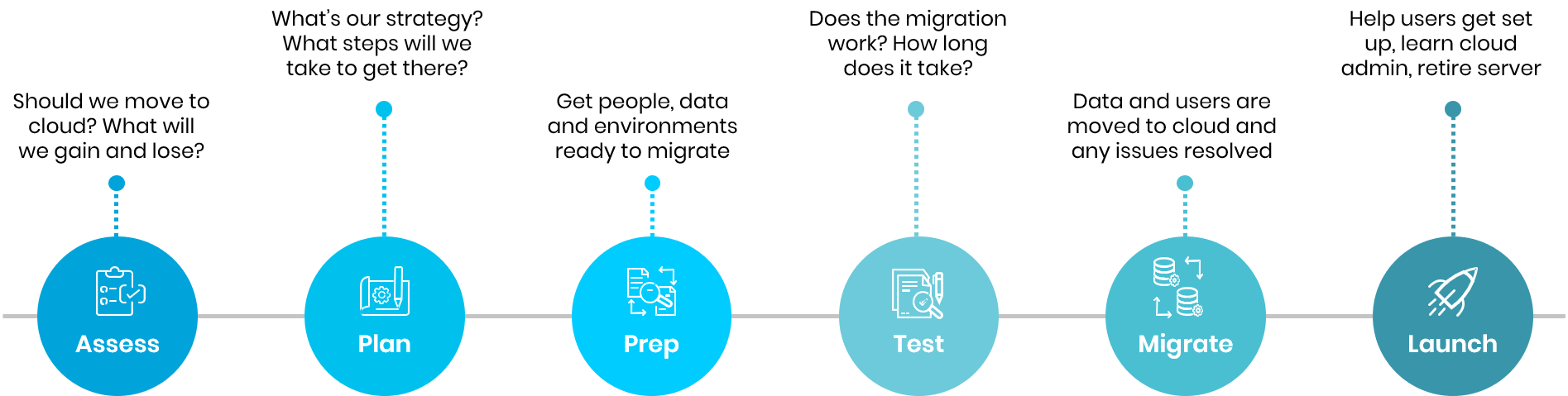

How to Move into a Hybrid Data Center Environment

Migrating in-house systems to a cloud environment is never a simple process for an enterprise. Moving dozens or even hundreds of different systems can cause production outages, and data can be lost when existing systems are decommissioned.

For most companies that decide to move their infrastructure to a cloud solution, there is a transitional period where some systems continue in-house while others migrate to the cloud. This hybrid solution can go on for months, if not years, depending on the scope of the migration.

While a hybrid data center offers your organization incredible flexibility, poor management of its data puts your company's data assets at risk. You need a plan that harnesses the power of a hybrid data center while protecting the company's security and reputation.

A well-designed data roadmap prepares organizations to anticipate change and embrace the disruption it brings. A schedule of which systems will be migrated, and when, supports appropriate planning and serves as an essential tool for coordinating with the teams that rely on those systems.
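One way to turn "which systems, and when" into a schedule is to group systems into migration waves so that each system moves only after the systems it depends on. The sketch below does this with Kahn's topological-sort algorithm, processed layer by layer, and raises an error on circular dependencies (such systems usually need to move together). The system names and the "dependencies move first" rule are assumptions for illustration.

```python
from collections import defaultdict


def plan_waves(depends_on):
    """Group systems into waves; each system follows all of its dependencies.

    `depends_on` maps a system name to the set of systems it depends on.
    """
    systems = set(depends_on) | {d for deps in depends_on.values() for d in deps}
    remaining = {s: set(depends_on.get(s, set())) for s in systems}
    dependents = defaultdict(set)
    for system, deps in remaining.items():
        for dep in deps:
            dependents[dep].add(system)

    waves = []
    ready = sorted(s for s, deps in remaining.items() if not deps)
    while ready:
        waves.append(ready)
        next_ready = set()
        for system in ready:
            for child in dependents[system]:
                remaining[child].discard(system)
                if not remaining[child]:
                    next_ready.add(child)
        ready = sorted(next_ready)

    if sum(len(w) for w in waves) != len(systems):
        raise ValueError("circular dependency: these systems must migrate together")
    return waves


# Hypothetical dependency map.
deps = {
    "auth-service": {"user-db"},
    "web-frontend": {"auth-service", "catalog-api"},
    "catalog-api": {"catalog-db"},
}
for i, wave in enumerate(plan_waves(deps), start=1):
    print(f"wave {i}: {', '.join(wave)}")
```

Here the two databases land in wave 1, the services that use them in wave 2, and the front end in wave 3; each wave can then be given a date on the migration schedule.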

What will existing engineers do?

With a hybrid data center, data protection primarily rests in the hands of the enterprise. This means business and IT teams need to have a clear understanding of what data exists, where it resides, how sensitive it is, and who needs to access it.
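Those four questions map naturally onto a data inventory record. The sketch below stores one record per data asset and audits the inventory for two gaps: assets that cannot yet answer every question (no sensitivity label or no known readers) and restricted data sitting outside the on-premises environment. The field names, the `"restricted"` label, and the location strings are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DataAsset:
    name: str                          # what data exists
    location: str                      # where it resides, e.g. "on-premises" or "cloud:<bucket>"
    sensitivity: Optional[str] = None  # how sensitive it is; None means not yet classified
    readers: set = field(default_factory=set)  # who needs to access it


def audit(assets):
    """Flag assets the inventory cannot fully describe, and restricted data held off-premises."""
    return {
        "incomplete": [a.name for a in assets if a.sensitivity is None or not a.readers],
        "restricted_off_premises": [
            a.name for a in assets
            if a.sensitivity == "restricted" and a.location != "on-premises"
        ],
    }


inventory = [
    DataAsset("customer-pii", "on-premises", "restricted", {"crm-team"}),
    DataAsset("clickstream", "cloud:analytics-bucket", "internal", {"analytics"}),
    DataAsset("legacy-exports", "cloud:archive-bucket"),
    DataAsset("payroll", "cloud:hr-saas", "restricted", {"hr"}),
]
print(audit(inventory))
```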

Because moving workloads to the cloud reduces the number of engineers needed to support in-house infrastructure, businesses must decide which engineering teams will manage the physical data center going forward. Once these responsibilities are established, there are generally two options:

Adjust IT responsibilities

Allowing these IT professionals to transition from a maintenance and support role to something more innovative is often the ideal solution. Engineers who have detailed knowledge of how systems work can be an invaluable resource when they can focus on process and system improvement — rather than managing the day-to-day maintenance and support of the infrastructure.

Focus on continuous migration progress

On larger-scale data center migrations to the cloud, a common challenge is that teams grow comfortable with slow progress, or none at all. While operating a hybrid infrastructure can be a good way to increase data availability, it should never be a state that a company drifts into by default.

As cloud migration starts, the technical teams involved need to keep pushing milestones forward according to the established schedule. In the event of any delay, the schedule should be immediately updated to ensure the digital transformation does not lose momentum.
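Updating the schedule after a delay can be as simple as pushing the slipped milestone, and every milestone after it, back by the same number of days, so that later teams see the new dates immediately. This is a deliberately naive sketch: it assumes milestones are listed in schedule order and that every later milestone depends on the one that slipped; a real plan would shift only true dependents. The milestone names and dates are hypothetical.

```python
from datetime import date, timedelta


def apply_delay(milestones, slipped, delay_days):
    """Push the slipped milestone and every milestone after it back by delay_days.

    `milestones` is a list of (name, due_date) pairs in schedule order.
    """
    names = [name for name, _ in milestones]
    if slipped not in names:
        raise KeyError(slipped)
    start = names.index(slipped)
    shift = timedelta(days=delay_days)
    return [
        (name, due + shift if i >= start else due)
        for i, (name, due) in enumerate(milestones)
    ]


# Hypothetical schedule.
schedule = [
    ("wave-1 cutover", date(2024, 3, 1)),
    ("wave-2 cutover", date(2024, 4, 15)),
    ("decommission rack A", date(2024, 6, 1)),
]
for name, due in apply_delay(schedule, "wave-2 cutover", 14):
    print(name, due.isoformat())
```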

Once teams have a firm understanding of responsibilities and data visibility, businesses can safely transition to a hybrid cloud environment with as little disruption as possible.

Trianz' Approach to Implementing a Hybrid Data Center Architecture

For a sizeable IT operation, we take a four-step approach. At each step, Trianz draws on data from cloud adoptions, pre-developed templates, analytical tools, and our own intellectual property (IP). This ensures that the migration process is data-driven and accelerated.

For your data migration to a multi-cloud environment, we begin with an assessment to gain a nuanced understanding of your enterprise's infrastructure and cloud security readiness. We then work with you to develop a strategy, which covers:

- Workload discovery and cataloging; re-host, replace, rearchitect, refactor, rebuild, and retire/retain categorization

- Financial planning and ROI analysis

- Data governance standards

- Platform selection, architecture, and design

- Cloud security assessment

- Migration prioritization and sequencing
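The categorization in the first bullet can be illustrated as a fixed sequence of questions per workload, where the first question that applies decides the strategy. This is one illustrative ordering, not Trianz's method: the profile fields, the order of the questions, and the mapping from amount of code change to re-host, refactor, rearchitect, or rebuild are all assumptions made for this sketch.

```python
from dataclasses import dataclass
from enum import Enum


class Strategy(Enum):
    REHOST = "re-host"
    REPLACE = "replace"
    REARCHITECT = "rearchitect"
    REFACTOR = "refactor"
    REBUILD = "rebuild"
    RETIRE = "retire"
    RETAIN = "retain"


@dataclass
class WorkloadProfile:
    name: str
    still_used: bool
    must_stay_on_premises: bool  # e.g. a regulatory or latency constraint
    saas_alternative: bool       # a commercial product could replace it
    code_change: str             # hypothetical scale: "none", "minor", "major", "rewrite"


# Illustrative mapping from the amount of code change to a strategy (an assumption).
_BY_CODE_CHANGE = {
    "none": Strategy.REHOST,
    "minor": Strategy.REFACTOR,
    "major": Strategy.REARCHITECT,
    "rewrite": Strategy.REBUILD,
}


def categorize(w: WorkloadProfile) -> Strategy:
    # Questions are asked in a fixed order; the first one that applies decides.
    if not w.still_used:
        return Strategy.RETIRE
    if w.must_stay_on_premises:
        return Strategy.RETAIN
    if w.saas_alternative:
        return Strategy.REPLACE
    return _BY_CODE_CHANGE[w.code_change]


catalog = [
    WorkloadProfile("fax-gateway", False, False, False, "none"),
    WorkloadProfile("core-ledger", True, True, False, "major"),
    WorkloadProfile("intranet-wiki", True, False, True, "minor"),
    WorkloadProfile("order-api", True, False, False, "minor"),
]
for w in catalog:
    print(w.name, "->", categorize(w).value)
```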

After the strategy is in place, we move on to tooling, automation, and testing. This is followed by executing the platform migration just prior to the final migration launch.
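As a generic illustration of the testing step (not Trianz's or EVOVE's tooling), a common post-migration check compares each table's row count and an order-independent checksum between source and target. The sketch sums truncated SHA-256 hashes of each row modulo 2^64, so row order does not matter but a missing or altered row changes the result. It assumes both sides return rows with the same Python value types; here both are in-memory SQLite databases with a hypothetical `orders` table, and the table name is assumed to be trusted because it is interpolated into the query.

```python
import hashlib
import sqlite3


def table_fingerprint(conn, table):
    """Row count plus an order-independent checksum of the table's contents."""
    count = 0
    checksum = 0
    for row in conn.execute(f"SELECT * FROM {table}"):  # table name assumed trusted
        digest = hashlib.sha256(repr(row).encode()).digest()
        checksum = (checksum + int.from_bytes(digest[:8], "big")) % 2**64
        count += 1
    return count, checksum


def make_db(rows):
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    return conn


source = make_db([(1, 9.99), (2, 25.0), (3, 4.5)])
target = make_db([(3, 4.5), (1, 9.99), (2, 25.0)])  # same rows, different order
broken = make_db([(1, 9.99), (2, 25.0)])            # a row was lost in transit

print(table_fingerprint(source, "orders") == table_fingerprint(target, "orders"))  # True
print(table_fingerprint(source, "orders") == table_fingerprint(broken, "orders"))  # False
```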

In addition, during the migration process, we use Trianz EVOVE, our proprietary methodology powered by CompilerWorks. EVOVE utilizes high levels of automation and reusable components to drive accelerated and high-accuracy migrations of legacy data platforms to a modern hybrid architecture.

Experience the Trianz Difference

Trianz simplifies the digital evolution of companies from strategy through execution. With a unique, multi-disciplinary, and collaborative model, Trianz helps clients transition to new business models and digitalized processes, and deliver great experiences using analytics, digital, cloud, infrastructure, and cybersecurity technologies. Leveraging a portfolio of digital platforms covering digital workplaces, cloud and infrastructure, and analytics, Trianz helps clients accelerate their transformations.

Trianz' portfolio of technologies and services has been rated #1 by a Fortune 1000 client base for five years in a row. Trianz has been ranked by Forbes as one of the best consulting firms and was recently certified as a Great Place to Work.

No matter the size or scope of your IT requirements, our team of cloud consultants will be happy to provide a detailed roadmap to help you find the best solution for your business. Get in touch to learn more about our hybrid data center solutions.